docs: create docs workspace (#5174)

* docs: migrate ui docs to docs universe
* created yarn workspace
* added eslint and tsconfig configurations
* fix eslint configurations
* fixed eslint configurations
* shared tailwind configurations
* added shared ui package
* added more shared components
* migrating more components
* made details components shared
* move InlineCode component
* moved InputText
* moved Loading component
* Moved Modal component
* moved Select components
* Moved Tooltip component
* moved Search components
* moved ColorMode provider
* Moved Notification components and providers
* used icons package
* use UI colors in api-reference
* moved Navbar component
* used Navbar and Search in UI docs
* added Feedback to UI docs
* general enhancements
* fix color mode
* added copy colors file from ui-preset
* added features and enhancements to UI docs
* move Sidebar component and provider
* general fixes and preparations for deployment
* update docusaurus version
* adjusted versions
* fix output directory
* remove rootDirectory property
* fix yarn.lock
* moved code component
* added vale for all docs MD and MDX
* fix tests
* fix vale error
* fix deployment errors
* change ignore commands
* add output directory
* fix docs test
* general fixes
* content fixes
* fix announcement script
* added changeset
* fix vale checks
* added nofilter option
* fix vale error


476

www/apps/docs/content/development/backend/configurations.md

Normal file


@@ -0,0 +1,476 @@

---
description: 'Learn about the different configurations available in a Medusa backend. This includes configurations related to the database, CORS, plugins, and more.'
---

# Configure Medusa Backend

This document provides a reference of all accepted Medusa configurations in `medusa-config.js`.

## Prerequisites

This document assumes you already followed along with the [Prepare Environment documentation](./prepare-environment.mdx) and have [installed a Medusa backend](./install.mdx#create-a-medusa-backend).

---

## Medusa Configurations File

The configurations for your Medusa backend are in `medusa-config.js` located in the root of your Medusa project. The configurations include database, modules, and plugin configurations, among other configurations.

`medusa-config.js` exports an object having the following properties:

| Property Name | Description | Required |
| --- | --- | --- |
| [projectConfig](#projectconfig) | An object that holds general configurations related to the Medusa backend, such as database or CORS configurations. | Yes |
| [plugins](#plugins) | An array of plugin configurations that defines what plugins are installed and optionally specifies each of their configurations. | No |
| [modules](#modules) | An object that defines what modules are installed and optionally specifies each of their configurations. | No |
| [featureFlags](#featureflags) | An object that enables or disables features guarded by a feature flag. | No |

For example:

```js title=medusa-config.js
module.exports = {
  projectConfig,
  plugins,
  modules,
  featureFlags,
}
```

---

## Environment Variables

Many of the configurations mentioned in this guide are recommended to have their values set in environment variables and referenced within `medusa-config.js`.

During development, you can set your environment variables in the `.env` file at the root of your Medusa backend project. In production, setting the environment variables depends on the hosting provider.
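
As a minimal sketch of this pattern, the snippet below maps environment variables onto a `projectConfig` object. The helper name `readProjectConfig` is an assumption made for illustration and is not part of Medusa; the configuration keys it fills are the ones documented in the `projectConfig` section of this document.

```js
// Hypothetical helper (not part of Medusa): builds a projectConfig object
// from an environment-like object so the values never live in source control.
function readProjectConfig(env = process.env) {
  return {
    database_url: env.DATABASE_URL,
    redis_url: env.REDIS_URL,
    admin_cors: env.ADMIN_CORS,
    store_cors: env.STORE_CORS,
  }
}

// Passing a plain object here only demonstrates the mapping; in a real
// medusa-config.js you would rely on process.env.
const exampleConfig = readProjectConfig({
  DATABASE_URL: "postgres://postgres@localhost/medusa-store",
  STORE_CORS: "http://localhost:8000",
})
```

Variables that are not set simply come through as `undefined`, so it is easy to see which values still need to be provided.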

---

## projectConfig

This section includes all configurations that belong to the `projectConfig` property in the configuration object exported by `medusa-config.js`. The `projectConfig` property is an object, and each of the configurations in this section is added to this object as key-value pairs.

### admin_cors and store_cors

The Medusa backend’s endpoints are protected by Cross-Origin Resource Sharing (CORS). So, only allowed URLs or URLs matching a specified pattern can send requests to the backend’s endpoints.

`admin_cors` is used to specify the accepted URLs or patterns for admin endpoints, and `store_cors` is used to specify the accepted URLs or patterns for store endpoints.

For both the `admin_cors` and `store_cors`, the value is expected to be a string. This string can be a comma-separated list of accepted origins. Every origin in that list can be of the following types:

1. A URL. For example, `http://localhost:8000`. The URL shouldn’t end with a forward slash (`/`).
2. A regular expression pattern that can match more than one origin. For example, `.example.com`. The regex pattern that the backend tests for is `^([\/~@;%#'])(.*?)\1([gimsuy]*)$`.

Here are some examples of common use cases:

```bash
# Allow different ports locally starting with 700
ADMIN_CORS=/http:\/\/localhost:700\d+$/

# Allow any origin ending with vercel.app. For example, storefront.vercel.app
STORE_CORS=/vercel\.app$/

# Allow all HTTP requests
ADMIN_CORS=/http:\/\/*/
```

Typically, the value of these configurations would be set in an environment variable and referenced in `medusa-config.js`:

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    admin_cors: process.env.ADMIN_CORS,
    store_cors: process.env.STORE_CORS,
    // ...
  },
  // ...
}
```

If you’re adding the value directly within `medusa-config.js`, make sure to add an extra escaping backslash (`\`) for every backslash in the pattern. For example:

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    admin_cors: "/http:\\/\\/localhost:700\\d+$/",
    store_cors: "/vercel\\.app$/",
    // ...
  },
  // ...
}
```
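
To make the two origin types in this section concrete, here is a rough sketch of how a comma-separated value could be split into literal URLs and regular expressions using the delimiter pattern quoted above. The function name `parseCorsOrigins` is hypothetical, and this is an illustration of the format only, not Medusa's actual implementation.

```js
// The delimiter pattern quoted in this section: a delimiter character,
// the pattern body, the same delimiter again, then optional flags.
const DELIMITED_PATTERN = /^([\/~@;%#'])(.*?)\1([gimsuy]*)$/

// Hypothetical helper (not Medusa's code): turns a CORS string into a list
// of literal origins (strings) and patterns (RegExp objects).
function parseCorsOrigins(value) {
  return value
    .split(",")
    .map((origin) => origin.trim())
    .filter((origin) => origin.length > 0)
    .map((origin) => {
      const match = origin.match(DELIMITED_PATTERN)
      // Delimited entries become RegExp objects; everything else stays a URL.
      return match ? new RegExp(match[2], match[3]) : origin
    })
}

const origins = parseCorsOrigins("http://localhost:8000,/vercel\\.app$/")
const literalOrigin = origins[0]
const patternOrigin = origins[1]
```

Note that the string literal in the usage line doubles the backslash, for the same reason given above for values written directly in `medusa-config.js`.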

### cookie_secret

A string that is used to create cookie tokens. Although this configuration option is not required, it’s highly recommended to set it for better security. It’s also recommended to generate a random string.

In a development environment, if this option is not set, the default secret is `supersecret`. However, in production, if this configuration is not set, an error is thrown and your backend crashes.

Typically, the value of this configuration would be set in an environment variable and referenced in `medusa-config.js`.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    cookie_secret: process.env.COOKIE_SECRET,
    // ...
  },
  // ...
}
```
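
One possible way to generate such a random string is Node.js's built-in `crypto` module. This is only a sketch; the 32-byte length and hexadecimal encoding are assumptions chosen for illustration, not a Medusa requirement. The same approach works for other secrets, such as `jwt_secret`.

```js
const crypto = require("crypto")

// 32 random bytes encoded as 64 hexadecimal characters.
const secret = crypto.randomBytes(32).toString("hex")

// Print it once, then store it in your environment (for example, as COOKIE_SECRET).
console.log(secret)
```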

### http_compression

This configuration enables HTTP compression from the application layer. If you have access to the HTTP server, the recommended approach would be to enable it there. However, some platforms don't offer access to the HTTP layer and in those cases, this is a good alternative.

Its value is an object that has the following properties:

- `enabled`: A boolean flag that indicates whether HTTP compression is enabled. It is disabled by default.
- `level`: A `number` value that indicates the level of zlib compression to apply to responses. A higher level will result in better compression but will take longer to complete. A lower level will result in less compression but will be much faster. The default value is `6`.
- `memLevel`: A `number` value that specifies how much memory should be allocated to the internal compression state. It's an integer in the range from 1 (minimum level) to 9 (maximum level). The default value is `8`.
- `threshold`: A `number` or a `string` value in bytes that specifies the minimum response body size that compression is applied on. This is the number of bytes or any string accepted by the bytes module. The default value is `1024`.

If you enable HTTP compression and you want to disable it for specific endpoints, you can pass in the request header `"x-no-compression": true`.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    http_compression: {
      enabled: true,
      level: 6,
      memLevel: 8,
      threshold: 1024,
    },
    // ...
  },
  // ...
}
```
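
As an illustration of the opt-out header mentioned in this section, the sketch below prepares request options that include `x-no-compression`. The URL is an assumption for a locally running backend and should be replaced with one of your own endpoints.

```js
// Assumed URL for illustration; replace it with one of your backend's endpoints.
const url = "http://localhost:9000/store/products"

// HTTP header values are sent as strings, so the flag is written as "true".
const requestOptions = {
  method: "GET",
  headers: { "x-no-compression": "true" },
}

// In an environment that provides fetch (browsers, Node.js 18+), the request
// could then be sent with: fetch(url, requestOptions)
```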

### jwt_secret

A string that is used to create authentication tokens. Although this configuration option is not required, it’s highly recommended to set it for better security. It’s also recommended to generate a random string.

In a development environment, if this option is not set, the default secret is `supersecret`. However, in production, if this configuration is not set, an error is thrown and your backend crashes.

Typically, the value of this configuration would be set in an environment variable and referenced in `medusa-config.js`.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    jwt_secret: process.env.JWT_SECRET,
    // ...
  },
  // ...
}
```

### database_database

The name of the database to connect to. If provided in `database_url`, then it’s not necessary to include it.

Make sure to create the PostgreSQL database before using it. You can check how to create a database in [PostgreSQL's documentation](https://www.postgresql.org/docs/current/sql-createdatabase.html).

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    database_database: "medusa-store",
    // ...
  },
  // ...
}
```

### database_extra

An object that includes additional configurations to pass to the database connection. You can pass any configuration. One defined configuration to pass is `ssl`, which enables support for TLS/SSL connections.

This is useful for production databases that require TLS/SSL connections, which you can support by setting the `rejectUnauthorized` attribute of the `ssl` object to `false`. During development, it’s recommended not to pass this option.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    database_extra:
      process.env.NODE_ENV !== "development"
        ? { ssl: { rejectUnauthorized: false } }
        : {},
    // ...
  },
  // ...
}
```

### database_logging

This configuration specifies what messages to log. Its value can be one of the following:

- (default) A boolean value that indicates whether any messages should be logged or not. By default, if no value is provided, the value will be `false`.
- The string value `all` that indicates all types of messages should be logged.
- An array of log-level strings to indicate which type of messages to show in the logs. The strings can be `query`, `schema`, `error`, `warn`, `info`, `log`, or `migration`. Refer to [Typeorm’s documentation](https://typeorm.io/logging#logging-options) for more details on what each of these values means.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    database_logging: [
      "query", "error",
    ],
    // ...
  },
  // ...
}
```

### database_schema

A string indicating the database schema to connect to. This is not necessary to provide if you’re using the default schema, which is `public`.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    database_schema: "custom",
    // ...
  },
  // ...
}
```

### database_type

A string indicating the type of database to connect to. At the moment, only `postgres` is accepted, which is also the default value.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    database_type: "postgres",
    // ...
  },
  // ...
}
```

### database_url

A string indicating the connection URL of the database. Typically, the connection URL would be set in an environment variable, and the variable would be referenced in `medusa-config.js`.

The format of the connection URL for PostgreSQL is:

```bash
postgres://[user][:password]@[host][:port]/[dbname]
```

Where:

- `[user]`: (required) your PostgreSQL username. If not specified, the system's username is used by default. The database user that you use must have create privileges. If you're using the `postgres` superuser, then it should have these privileges by default. Otherwise, make sure to grant your user create privileges. You can learn how to do that in [PostgreSQL's documentation](https://www.postgresql.org/docs/current/ddl-priv.html).
- `[:password]`: an optional password for the user. When provided, make sure to put `:` before the password.
- `[host]`: (required) your PostgreSQL host. When run locally, it should be `localhost`.
- `[:port]`: an optional port that the PostgreSQL server is listening on. By default, it's `5432`. When provided, make sure to put `:` before the port.
- `[dbname]`: (required) the name of the database.

For example, you can set the following database URL in your environment variables:

```bash
DATABASE_URL=postgres://postgres@localhost/medusa-store
```

You can learn more about the connection URL format in [PostgreSQL’s documentation](https://www.postgresql.org/docs/current/libpq-connect.html).

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    database_url: process.env.DATABASE_URL,
    // ...
  },
  // ...
}
```
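
The URL format described in this section can also be assembled programmatically. The sketch below assumes a hypothetical helper named `buildPostgresUrl`, which is not part of Medusa, and percent-encodes the credentials so that special characters don't break the URL.

```js
// Hypothetical helper (not part of Medusa): assembles a PostgreSQL connection URL
// in the form postgres://[user][:password]@[host][:port]/[dbname].
function buildPostgresUrl({ user, password, host, port, dbname }) {
  // Percent-encode credentials so characters like "@" or ":" don't break the URL.
  const credentials = password
    ? `${encodeURIComponent(user)}:${encodeURIComponent(password)}`
    : encodeURIComponent(user)
  // The port is optional; when omitted, PostgreSQL's default applies.
  const address = port ? `${host}:${port}` : host
  return `postgres://${credentials}@${address}/${dbname}`
}

const localUrl = buildPostgresUrl({
  user: "postgres",
  host: "localhost",
  dbname: "medusa-store",
})
// localUrl is "postgres://postgres@localhost/medusa-store"
```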

### redis_url

This configuration is used to specify the URL to connect to Redis. This is only used for scheduled jobs. If you omit this configuration, scheduled jobs will not work.

:::note

You must first have Redis installed. You can refer to [Redis's installation guide](https://redis.io/docs/getting-started/installation/).

:::

The Redis connection URL has the following format:

```bash
redis[s]://[[username][:password]@][host][:port][/db-number]
```

For a local Redis installation, the connection URL should be `redis://localhost:6379` unless you’ve made any changes to the Redis configuration during installation.

Typically, the value would be added as an environment variable and referenced in `medusa-config.js`.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    redis_url: process.env.REDIS_URL,
    // ...
  },
  // ...
}
```
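
To show how the parts of the Redis URL format map onto a concrete value, here is a small sketch based on the standard `URL` class available in Node.js. The helper name `describeRedisUrl` is hypothetical, and treating a missing port as `6379` and a missing database number as `0` reflects Redis's usual defaults rather than anything Medusa-specific.

```js
// Hypothetical helper (not part of Medusa or ioredis): splits a Redis URL into parts.
function describeRedisUrl(redisUrl) {
  const parsed = new URL(redisUrl)
  if (parsed.protocol !== "redis:" && parsed.protocol !== "rediss:") {
    throw new Error(`Unexpected protocol: ${parsed.protocol}`)
  }
  return {
    tls: parsed.protocol === "rediss:", // the optional "s" selects a TLS connection
    host: parsed.hostname,
    port: parsed.port ? Number(parsed.port) : 6379, // 6379 is Redis's default port
    db: parsed.pathname.length > 1 ? Number(parsed.pathname.slice(1)) : 0,
  }
}

const local = describeRedisUrl("redis://localhost:6379")
const remote = describeRedisUrl("rediss://user:secret@example.com:6380/2")
```

A check like this can be handy at startup to catch a mistyped `REDIS_URL` before the backend tries to connect.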

### redis_prefix

The prefix set on all keys stored in Redis. The default value is `sess:`. If this configuration option is provided, it is prepended to `sess:`.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    redis_prefix: "medusa:",
    // ...
  },
  // ...
}
```

### redis_options

An object of options to pass to ioredis. You can refer to [ioredis’s RedisOptions documentation](https://redis.github.io/ioredis/index.html#RedisOptions) for the list of available options.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    redis_options: {
      connectionName: "medusa",
    },
    // ...
  },
  // ...
}
```

### session_options

An object of options to pass to `express-session`. The object can have the following properties:

- `name`: A string indicating the name of the session ID cookie to set in the response (and read from in the request). The default value is `connect.sid`. Refer to [express-session’s documentation](https://www.npmjs.com/package/express-session#name) for more details.
- `resave`: A boolean value that indicates whether the session should be saved back to the session store, even if the session was never modified during the request. The default value is `true`. Refer to [express-session’s documentation](https://www.npmjs.com/package/express-session#resave) for more details.
- `rolling`: A boolean value that indicates whether the session identifier cookie should be force-set on every response. The default value is `false`. Refer to [express-session’s documentation](https://www.npmjs.com/package/express-session#rolling) for more details.
- `saveUninitialized`: A boolean value that indicates whether a session that is "uninitialized" is forced to be saved to the store. The default value is `true`. Refer to [express-session’s documentation](https://www.npmjs.com/package/express-session#saveUninitialized) for more details.
- `secret`: A string that indicates the secret to sign the session ID cookie. By default, the value of [cookie_secret](#cookie_secret) will be used. Refer to [express-session’s documentation](https://www.npmjs.com/package/express-session#secret) for details.
- `ttl`: A number that is used when calculating the `Expires` `Set-Cookie` attribute of cookies. By default, it’ll be `10 * 60 * 60 * 1000`. Refer to [express-session’s documentation](https://www.npmjs.com/package/express-session#cookiemaxage) for details.

```js title=medusa-config.js
module.exports = {
  projectConfig: {
    session_options: {
      name: "custom",
    },
    // ...
  },
  // ...
}
```
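
The default `ttl` expression is easier to read once its units are spelled out. The sketch below assumes the value is in milliseconds, as it is for the `cookie.maxAge` option of express-session that the `ttl` description links to, which makes the default ten hours. The 24-hour override is a hypothetical example.

```js
// Named units make the arithmetic behind the default value easier to read.
const SECOND = 1000 // milliseconds
const MINUTE = 60 * SECOND
const HOUR = 60 * MINUTE

// The default described above: 10 * 60 * 60 * 1000 milliseconds, i.e. 10 hours.
const defaultTtl = 10 * HOUR

// A hypothetical override that keeps sessions for 24 hours instead.
const sessionOptions = {
  ttl: 24 * HOUR,
}
```

An object like `sessionOptions` would then be passed as the `session_options` value inside `projectConfig`.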

---

## plugins

On your Medusa backend, you can use Plugins to add custom features or integrate third-party services. For example, you can install a plugin to use Stripe as a payment processor.

Aside from installing the plugin with NPM, you need to pass the plugin you installed into the `plugins` array defined in `medusa-config.js`.

The items in the array can either be:

- A string, which is the name of the plugin to add. You can pass a plugin as a string if it doesn’t require any configurations.
- An object having the following properties:
    - `resolve`: A string indicating the name of the plugin.
    - `options`: An object that includes the plugin’s options. These options vary for each plugin, and you should refer to the plugin’s documentation for details on them.

For example:

```js title=medusa-config.js
module.exports = {
  plugins: [
    `medusa-my-plugin-1`,
    {
      resolve: `medusa-my-plugin`,
      options: {
        apiKey: `test`, // or use env variables
      },
    },
    // ...
  ],
  // ...
}
```

You can refer to the [Plugins Overview documentation](../../plugins/overview.mdx) for a list of available official plugins.

---

## modules

In Medusa, commerce and core logic are modularized to allow developers to extend or replace certain modules with custom implementations.

Aside from installing the module with NPM, you need to add it to the exported object in `medusa-config.js`.

The keys of the `modules` configuration object refer to the type of module. Its value can be one of the following:

1. A boolean value that indicates whether the module type is enabled.
2. A string value that indicates the name of the module to be used for the module type. This can be used if the module does not require any options.
3. An object having the following properties, but typically you would mainly use the `resolve` and `options` properties only:
    1. `resolve`: a string indicating the name of the module.
    2. `options`: an object indicating the options to pass to the module. These options vary for each module, and you should refer to the module’s documentation for details on them.
    3. `resources`: a string indicating whether the module shares the dependency container with the Medusa core. Its value can either be `shared` or `isolated`. Refer to the [Modules documentation](../modules/create.mdx#module-scope) for more details.
    4. `alias`: a string indicating a unique alias to register the module under. Other modules can’t use the same alias.
    5. `main`: a boolean value indicating whether this module is the main registered module. This is useful when an alias is used.

For example:

```js title=medusa-config.js
module.exports = {
  modules: {
    eventBus: {
      resolve: "@medusajs/event-bus-local",
    },
    cacheService: {
      resolve: "@medusajs/cache-redis",
      options: {
        redisUrl: process.env.CACHE_REDIS_URL,
        ttl: 30,
      },
    },
    // ...
  },
  // ...
}
```

Learn more about [Modules and how to create and use them](../modules/overview.mdx).

---

## featureFlags

Some features in the Medusa backend are guarded by a feature flag. This ensures constant shipping of new features while maintaining the engine’s stability.

You can specify whether a feature should or shouldn’t be used in your backend by enabling its feature flag. Feature flags can be enabled through environment variables or through this configuration exported in `medusa-config.js`. If you want to use the environment variables method, learn more about it in the [Feature Flags documentation](../feature-flags/toggle.md#method-one-using-environment-variables).

The `featureFlags` configuration is an object. Its properties are the names of the different feature flags. Each property’s value is a boolean indicating whether the feature flag is enabled.

You can find available feature flags and their key name [here](https://github.com/medusajs/medusa/tree/master/packages/medusa/src/loaders/feature-flags).

For example:

```js title=medusa-config.js
module.exports = {
  featureFlags: {
    product_categories: true,
    // ...
  },
  // ...
}
```

Learn more about [Feature flags and how to toggle them](../feature-flags/overview.mdx).

:::note

After enabling a feature flag, make sure to [run migrations](../entities/migrations/overview.mdx#migrate-command) as it may require making changes to the database.

:::


177

www/apps/docs/content/development/backend/directory-structure.md

Normal file


@@ -0,0 +1,177 @@

---
description: "In this document, you’ll learn about the directory structure of a Medusa backend. It’ll help you understand the purpose of each file and folder in your Medusa backend project."
---

# Medusa Backend Directory Structure

In this document, you’ll learn about the directory structure of a Medusa backend. It’ll help you understand the purpose of each file and folder in your Medusa backend project.

---

## Root Files

These are files present at the root of your Medusa backend.

### .babelrc.js

Defines Babel’s configurations, which are used when running the `build` command that transpiles files from the `src` directory to the `dist` directory.

### .env

Includes the values of environment variables. This is typically only used in development. In production, you should define environment variables based on your hosting provider.

### .env.template

Gives an example of what variables may be included in `.env`.

### .gitignore

Specifies files that shouldn’t be committed to a Git repository.

### .yarnrc.yml

Ensures dependencies are always installed in `node_modules`. This ensures compatibility with pnpm.

### index.js

Defines an entry file, which is useful when starting the Medusa backend with a process manager like pm2.

### medusa-config.js

Defines the Medusa backend’s configurations, including the database configurations, plugins used, modules used, and more.

**Read more:** [Medusa backend configurations](./configurations.md).

### package.json

Since the Medusa backend is an NPM package, this file defines its information as well as its dependencies. It will also include any new dependencies you install.

### README.md

Provides general information about the Medusa backend.

### tsconfig.admin.json

Defines the TypeScript configurations that are used to transpile admin customization files. So, it only works for files under the [src/admin directory](#admin).

### tsconfig.json

Defines the general TypeScript configurations used to transpile files from the `src` directory to the `dist` directory.

### tsconfig.server.json

Defines the TypeScript configurations that are used to transpile Medusa backend customization files. It works for all files except for the files under the `src/admin` directory.

### tsconfig.spec.json

Defines TypeScript configurations for test files. These are files that either reside under a `__tests__` directory under `src`, or that have a file name ending with one of the following:

- `.test.ts` or `.test.js`
- `.spec.ts` or `.spec.js`
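
The rule for what counts as a test file can be expressed as a short predicate. This is a sketch of the description above, not the actual patterns inside `tsconfig.spec.json`; the function name `isTestFile` is hypothetical, paths are assumed to use forward slashes, and treating `.spec.js` as a test suffix is an assumption made by symmetry with `.test.js`.

```js
// Hypothetical helper: mirrors the rule described above, not the actual
// include patterns inside tsconfig.spec.json.
function isTestFile(filePath) {
  const segments = filePath.split("/")
  const fileName = segments[segments.length - 1]
  // True when some directory between src and the file is named __tests__.
  const inTestsDir =
    segments[0] === "src" && segments.slice(1, -1).includes("__tests__")
  // True for names ending in .test.ts, .test.js, .spec.ts, or .spec.js.
  const hasTestSuffix = /\.(test|spec)\.(ts|js)$/.test(fileName)
  return inTestsDir || hasTestSuffix
}

const examples = [
  "src/services/__tests__/cart.ts",
  "src/services/cart.spec.ts",
  "src/services/cart.ts",
].map((path) => [path, isTestFile(path)])
```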

### yarn.lock or package-lock.json

An automatically generated file by `yarn` or `npm` that holds the current versions of all dependencies installed to ensure the correct versions are always installed.

If you used the `create-medusa-app` command to install the Medusa backend, it’ll attempt to use `yarn` by default to install the dependencies. If `yarn` is not installed on your machine, it will then fall back to using `npm`.

Based on the package manager used to install the dependencies, either `yarn.lock` or `package-lock.json` will be available, or both.

---

## Root Directories

These are the directories present at the root of your Medusa backend.

### .cache

This directory will only be available if you have the Medusa admin installed and you’ve already started your Medusa backend at least once before. It holds all cached files related to building the Medusa admin assets.

### build

This directory will only be available if you have the Medusa admin installed and you’ve either built your admin files or run the Medusa backend at least once before. It holds the built files that are used to serve the admin in your browser.

### data

This directory holds a JSON file used to seed your Medusa backend with dummy data, which can be useful for demo purposes. The data is seeded automatically if you include the `--seed` option when using either the `create-medusa-app` or `medusa new` commands.

You can also seed the data by running the following command:

```bash npm2yarn
npm run seed
```

### dist

This directory holds the transpiled Medusa backend customizations. This directory may not be available when you first install the Medusa backend. It’ll be available when you run the `build` command or start your Medusa backend with the `dev` command.

The files under this directory are the files that are used in your Medusa backend. So, when you make any changes under `src`, make sure the changes are transpiled into the `dist` directory. If you’re using the `dev` or `medusa develop` commands, this is handled automatically whenever changes occur under the `src` directory.

### node_modules

This directory holds all installed dependencies in your project.

### src

This directory holds all Medusa backend and admin customizations. More details about each subdirectory are included in [this section](#src-subdirectories).

### uploads

This directory holds all file uploads to the Medusa backend. It’s only used if you’re using the [Local File Service plugin](../../plugins/file-service/local.md), which is installed by default.

---

|

||||

|

||||

## src Subdirectories

|

||||

|

||||

Files under the `src` directory hold the Medusa backend and admin customizations. These files should later be transpiled into the `dist` directory for them to be used during the backend’s runtime.

|

||||

|

||||

If any of these directories are not available, you can create them yourself.

|

||||

|

||||

### admin

|

||||

|

||||

This directory holds all Medusa admin customizations. The main subdirectories of this directory are:

|

||||

|

||||

- `widgets`: Holds all [Medusa admin widgets](../../admin/widgets.md).

|

||||

- `routes`: Holds all [Medusa admin UI routes](../../admin/routes.md).

|

||||

|

||||

### api

|

||||

|

||||

This directory holds all custom endpoints. You can create as many subdirectories and files that hold endpoint definitions, but only endpoints exported by the `index.ts` file are registered in the Medusa backend.

|

||||

|

||||

**Read more:** [Endpoints](../endpoints/overview.mdx)

|

||||

|

||||

### loaders

|

||||

|

||||

This directory holds scripts that run when the Medusa backend starts. For example, the scripts can define a scheduled job.

|

||||

|

||||

**Read more:** [Loaders](../loaders/overview.mdx)

|

||||

|

||||

### migrations

|

||||

|

||||

This directory holds all migration scripts that reflect changes on the database the Medusa backend is connected to.

|

||||

|

||||

**Read more:** [Migrations](../entities/migrations/overview.mdx)

|

||||

|

||||

### models

|

||||

|

||||

This directory holds all custom entities, which represent tables in your database. You can create a new entity, or customize a Medusa entity.

|

||||

|

||||

**Read more:** [Entities](../entities/overview.mdx)

|

||||

|

||||

### repositories

|

||||

|

||||

This directory holds all custom repositories, which provide utility methods to access and modify data related to an entity.

|

||||

|

||||

**Read more:** [Repositories](../entities/overview.mdx#what-are-repositories)

|

||||

|

||||

### services

|

||||

|

||||

This directory holds all custom services. Services define utility methods related to an entity or feature that can be used across the Medusa backend’s resources.

|

||||

|

||||

**Read more:** [Services](../services/overview.mdx)

|

||||

|

||||

### subscribers

|

||||

|

||||

This directory holds all custom subscribers. Subscribers listen to emitted events and register methods to handle them.

|

||||

|

||||

**Read more:** [Subscribers](../events/subscribers.mdx)

|

||||

165

www/apps/docs/content/development/backend/install.mdx

Normal file

@@ -0,0 +1,165 @@

|

||||

---

|

||||

description: 'This quickstart guide will help you set up a Medusa backend in three steps.'

|

||||

addHowToData: true

|

||||

---

|

||||

|

||||

import Feedback from '@site/src/components/Feedback';

|

||||

import DocCardList from '@theme/DocCardList';

|

||||

import Icons from '@theme/Icon';

|

||||

import Troubleshooting from '@site/src/components/Troubleshooting'

|

||||

import SaslSection from '../../troubleshooting/database-errors/_sasl.md'

|

||||

import ConnectionErrorSection from '../../troubleshooting/database-errors/_connection-error.md'

|

||||

import FreshInstallationSection from '../../troubleshooting/awilix-resolution-error/_fresh-installation.md'

|

||||

import EaddrinuseSection from '../../troubleshooting/eaddrinuse.md'

|

||||

|

||||

# Install Medusa Backend

|

||||

|

||||

This document will guide you through setting up your Medusa backend in three steps.

|

||||

|

||||

## Prerequisites

|

||||

|

||||

Before you can install and use Medusa, you need the following tools installed on your machine:

|

||||

|

||||

- [Node.js v16+](./prepare-environment.mdx#nodejs)

|

||||

- [Git](./prepare-environment.mdx#git)

|

||||

- [PostgreSQL](./prepare-environment.mdx#postgresql)

|

||||

|

||||

---

|

||||

|

||||

## Create a Medusa Backend

|

||||

|

||||

:::tip

|

||||

|

||||

It is recommended to use [Yarn](https://yarnpkg.com/getting-started/install) for the installation process as it's much faster than using NPM.

|

||||

|

||||

:::

|

||||

|

||||

### 1. Install Medusa CLI

|

||||

|

||||

To install the Medusa backend, you need Medusa's CLI tool. You can install it globally or, alternatively, use it through npx with `npx @medusajs/medusa-cli <command>`.

|

||||

|

||||

```bash npm2yarn

|

||||

npm install @medusajs/medusa-cli -g

|

||||

```

|

||||

|

||||

:::note

|

||||

|

||||

If you run into any errors while installing the CLI tool, check out the [troubleshooting guide](../../troubleshooting/cli-installation-errors.mdx).

|

||||

|

||||

:::

|

||||

|

||||

### 2. Create a new Medusa project

|

||||

|

||||

```bash noReport

|

||||

medusa new my-medusa-store # or npx @medusajs/medusa-cli new

|

||||

```

|

||||

|

||||

You'll then be asked to specify your PostgreSQL database credentials. You can choose "Continue" to use the default credentials shown in the terminal, choose "Change credentials" to specify your PostgreSQL credentials, or choose "Skip database setup" to create the database later.

|

||||

|

||||

:::warning

|

||||

|

||||

If you choose "Skip database setup" you will need to [set the database configurations](./configurations.md#database-configuration) and [run migrations](../entities/migrations/overview.mdx#migrate-command) later.

|

||||

|

||||

:::
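
If you skip the database setup, the backend will later need a PostgreSQL connection string. As a rough sketch, the helper below assembles one from individual credentials. The function name `buildDatabaseUrl`, the `DbCredentials` type, and the sample values are illustrative assumptions and not part of Medusa; refer to the database configuration reference linked above for the setting that consumes this value.

```ts
// Hypothetical helper: not part of Medusa. It only shows the usual shape of a
// PostgreSQL connection URL: postgres://user:password@host:port/database
type DbCredentials = {
  user: string
  password: string
  host: string
  port: number
  database: string
}

function buildDatabaseUrl(creds: DbCredentials): string {
  // Percent-encode the user and password so characters such as "@" or ":"
  // don't break the URL structure.
  const user = encodeURIComponent(creds.user)
  const password = encodeURIComponent(creds.password)
  return `postgres://${user}:${password}@${creds.host}:${creds.port}/${creds.database}`
}

// Example values are placeholders; replace them with your own credentials.
const exampleDatabaseUrl = buildDatabaseUrl({
  user: "postgres",
  password: "p@ss:word",
  host: "localhost",
  port: 5432,
  database: "medusa-store",
})
```

Encoding the credentials matters mostly for passwords that contain reserved URL characters; plain alphanumeric values pass through unchanged.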

|

||||

|

||||

### 3. Start your Medusa backend

|

||||

|

||||

:::note

|

||||

|

||||

Make sure your PostgreSQL server is running before you run the Medusa backend.

|

||||

|

||||

:::

|

||||

|

||||

```bash noReport

|

||||

cd my-medusa-store

|

||||

medusa develop # or npx medusa develop

|

||||

```

|

||||

|

||||

After these three steps and in only a couple of minutes, you now have a complete commerce engine running locally. You can test it out by sending a request using a tool like Postman or through the command line:

|

||||

|

||||

```bash noReport

|

||||

curl localhost:9000/store/products

|

||||

```

|

||||

|

||||

<Feedback

|

||||

event="survey_server_quickstart"

|

||||

question="Did you set up the backend successfully?"

|

||||

positiveQuestion="Is there anything that should be improved?"

|

||||

negativeQuestion="Please describe the issue you faced."

|

||||

/>

|

||||

|

||||

---

|

||||

|

||||

## Troubleshooting Installation

|

||||

|

||||

<Troubleshooting

|

||||

sections={[

|

||||

{

|

||||

title: 'Error: SASL: SCRAM-SERVER-FIRST-MESSAGE: Client password must be a string',

|

||||

content: <SaslSection />

|

||||

},

|

||||

{

|

||||

title: 'Error: connect ECONNREFUSED ::1:5432',

|

||||

content: <ConnectionErrorSection />

|

||||

},

|

||||

{

|

||||

title: 'Error: EADDRINUSE',

|

||||

content: <EaddrinuseSection />

|

||||

},

|

||||

{

|

||||

title: 'AwilixResolutionError: Could Not Resolve X',

|

||||

content: <FreshInstallationSection />

|

||||

},

|

||||

]}

|

||||

/>

|

||||

|

||||

---

|

||||

|

||||

## Seed Data

|

||||

|

||||

For better testing, you can add demo data to your Medusa backend by running the seed command in your Medusa backend directory:

|

||||

|

||||

```bash

|

||||

medusa seed --seed-file=data/seed.json

|

||||

# or npx medusa seed --seed-file=data/seed.json

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Health Route

|

||||

|

||||

You can access `/health` to get the health status of your backend.
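
As a small sketch, the helper below builds the health-check URL from a backend base URL. The name `healthUrl` is hypothetical, and the base URL assumes the backend listens on `localhost:9000`, as in the `curl` example earlier in this guide.

```ts
// Hypothetical helper: builds the health-check URL for a backend base URL.
function healthUrl(backendUrl: string): string {
  // Drop any trailing slashes so the result never contains "//health".
  return `${backendUrl.replace(/\/+$/, "")}/health`
}

// Assumes the backend runs locally on port 9000.
const localHealthUrl = healthUrl("http://localhost:9000/")
```

You can then request the resulting URL with any HTTP client, for example `curl` or the Fetch API, and inspect the response status.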

|

||||

|

||||

---

|

||||

|

||||

## Next Steps

|

||||

|

||||

<DocCardList colSize={4} items={[

|

||||

{

|

||||

type: 'link',

|

||||

href: '/development/backend/directory-structure',

|

||||

label: 'Directory Structure',

|

||||

customProps: {

|

||||

icon: Icons['document-text-solid'],

|

||||

description: 'Learn about the purpose of each file and directory in the Medusa backend.'

|

||||

}

|

||||

},

|

||||

{

|

||||

type: 'link',

|

||||

href: '/development/backend/configurations',

|

||||

label: 'Backend Configurations',

|

||||

customProps: {

|

||||

icon: Icons['tools-solid'],

|

||||

description: 'Learn about configuring your backend and loading environment variables.'

|

||||

}

|

||||

},

|

||||

{

|

||||

type: 'link',

|

||||

href: '/development/fundamentals/architecture-overview',

|

||||

label: 'Backend Architecture',

|

||||

customProps: {

|

||||

icon: Icons['circle-stack-solid'],

|

||||

description: 'Learn about the different resources that your Medusa backend is made of.'

|

||||

}

|

||||

}

|

||||

]} />

|

||||

@@ -0,0 +1,177 @@

|

||||

---

|

||||

description: 'Learn how to prepare your development environment while using Medusa. This guide includes how to install Node.js, Git, Medusa CLI tool, and PostgreSQL.'

|

||||

---

|

||||

|

||||

import Tabs from '@theme/Tabs';

|

||||

import TabItem from '@theme/TabItem';

|

||||

import MedusaCliTroubleshootingSection from '../../troubleshooting/cli-installation-errors/_reusable-section.mdx'

|

||||

|

||||

# Prepare Development Environment

|

||||

|

||||

This document includes the installation instructions for the tools required to use and run Medusa.

|

||||

|

||||

## Node.js

|

||||

|

||||

Node.js is the environment that makes it possible for Medusa to run, so you must install Node.js on your machine to start Medusa development.

|

||||

|

||||

:::caution

|

||||

|

||||

Medusa supports v16+ of Node.js. You can check your Node.js version using the following command:

|

||||

|

||||

```bash noReport

|

||||

node -v

|

||||

```

|

||||

|

||||

:::

|

||||

|

||||

<Tabs groupId="operating-systems" queryString="os">

|

||||

<TabItem value="windows" label="Windows" default>

|

||||

|

||||

You can install the executable directly from [the Node.js website](https://nodejs.org/en/#home-downloadhead).

|

||||

|

||||

For other approaches, you can check out [Node.js’s guide](https://nodejs.org/en/download/package-manager/#windows-1).

|

||||

|

||||

</TabItem>

|

||||

<TabItem value="linux" label="Linux">

|

||||

|

||||

You can use the following commands to install Node.js on Ubuntu:

|

||||

|

||||

```bash

|

||||

#Ubuntu

|

||||

sudo apt update

|

||||

sudo apt install nodejs

|

||||

```

|

||||

|

||||

For other Linux distributions, you can check out [Node.js’s guide](https://nodejs.org/en/download/package-manager/).

|

||||

|

||||

</TabItem>

|

||||

<TabItem value="macos" label="macOS">

|

||||

|

||||

You can use the following commands to install Node.js on macOS:

|

||||

|

||||

<Tabs groupId="homebrew" isCodeTabs={true}>

|

||||

<TabItem value="homebrew" label="Homebrew">

|

||||

|

||||

```bash

|

||||

brew install node

|

||||

```

|

||||

|

||||

</TabItem>

|

||||

<TabItem value="no-homebrew" label="Without Homebrew">

|

||||

|

||||

```bash

|

||||

curl \

|

||||

"https://nodejs.org/dist/latest/node-${VERSION:-$(wget -qO- \

|

||||

https://nodejs.org/dist/latest/ | sed -nE \

|

||||

's|.*>node-(.*)\.pkg</a>.*|\1|p')}.pkg" \

|

||||

> "$HOME/Downloads/node-latest.pkg" &&

|

||||

sudo installer -store -pkg "$HOME/Downloads/node-latest.pkg" -target "/"

|

||||

```

|

||||

|

||||

</TabItem>

|

||||

</Tabs>

|

||||

|

||||

For other approaches, you can check out [Node.js’s guide](https://nodejs.org/en/download/package-manager/#macos).

|

||||

|

||||

:::tip

|

||||

|

||||

Make sure that you have the Xcode command line tools installed; if not, run the following command to install them: `xcode-select --install`

|

||||

|

||||

:::

|

||||

</TabItem>

|

||||

</Tabs>

|

||||

|

||||

## Git

|

||||

|

||||

Medusa uses Git behind the scenes when you create a new project. So, you'll have to install it on your machine to get started.

|

||||

|

||||

<Tabs groupId="operating-systems" queryString="os">

|

||||

<TabItem value="windows" label="Windows" default>

|

||||

|

||||

To install Git on Windows, you need to [download the installable package](https://git-scm.com/download/win).

|

||||

|

||||

</TabItem>

|

||||

<TabItem value="linux" label="Linux">

|

||||

|

||||

For Debian/Ubuntu, you can use the following command:

|

||||

|

||||

```bash

|

||||

apt-get install git

|

||||

```

|

||||

|

||||

As for other Linux distributions, please check [git’s guide](https://git-scm.com/download/linux).

|

||||

|

||||

</TabItem>

|

||||

<TabItem value="macos" label="macOS">

|

||||

|

||||

You should already have Git installed as part of the Xcode command-line tools.

|

||||

|

||||

However, if for any reason you need to install it manually, you can install it with Homebrew:

|

||||

|

||||

```bash

|

||||

brew install git

|

||||

```

|

||||

|

||||

You can also check out [git’s guide](https://git-scm.com/download/mac) for more installation options.

|

||||

|

||||

</TabItem>

|

||||

</Tabs>

|

||||

|

||||

## PostgreSQL

|

||||

|

||||

The Medusa backend uses PostgreSQL to store data of your commerce system.

|

||||

|

||||

<Tabs groupId="operating-systems" queryString="os">

|

||||

<TabItem value="windows" label="Windows">

|

||||

|

||||

You can [download the PostgreSQL Windows installer](https://www.postgresql.org/download/windows/) from their website.

|

||||

|

||||

</TabItem>

|

||||

<TabItem value="linux" label="Linux">

|

||||

|

||||

If you’re using Ubuntu, you can use the following commands to download and install PostgreSQL:

|

||||

|

||||

```bash

|

||||

sudo sh -c \

|

||||

'echo "deb http://apt.postgresql.org/pub/repos/apt $(lsb_release -cs)-pgdg main" > /etc/apt/sources.list.d/pgdg.list'

|

||||

wget --quiet -O - \

|

||||

https://www.postgresql.org/media/keys/ACCC4CF8.asc | sudo apt-key add -

|

||||

sudo apt-get update

|

||||

sudo apt-get -y install postgresql

|

||||

```

|

||||

|

||||

For other distributions, you can check out [PostgreSQL’s website for more guides](https://www.postgresql.org/download/linux/).

|

||||

|

||||

</TabItem>

|

||||

<TabItem value="macos" label="macOS">

|

||||

|

||||

You can download PostgreSQL on your macOS using [the installer on their website](https://www.postgresql.org/download/macosx/).

|

||||

|

||||

</TabItem>

|

||||

</Tabs>

|

||||

|

||||

## (Optional) Medusa CLI

|

||||

|

||||

Medusa provides a CLI tool that can aid you throughout your Medusa development. You can install it globally, or you can use it through `npx`.

|

||||

|

||||

You can install Medusa’s CLI with the following command:

|

||||

|

||||

```bash npm2yarn

|

||||

npm install @medusajs/medusa-cli -g

|

||||

```

|

||||

|

||||

To confirm that the CLI tool was installed successfully, run the following command:

|

||||

|

||||

```bash noReport

|

||||

medusa -v

|

||||

```

|

||||

|

||||

### Troubleshooting Installation

|

||||

|

||||

<MedusaCliTroubleshootingSection />

|

||||

|

||||

---

|

||||

|

||||

## Install Medusa Backend

|

||||

|

||||

Once you're done installing the necessary tools in your environment, check out the [Medusa Backend Quickstart](./install.mdx) to install your Medusa backend.

|

||||

449

www/apps/docs/content/development/batch-jobs/create.mdx

Normal file

@@ -0,0 +1,449 @@

|

||||

---

|

||||

description: 'Learn how to create a batch job strategy in Medusa. This guide also includes how to test your batch job strategy.'

|

||||

addHowToData: true

|

||||

---

|

||||

|

||||

import Tabs from '@theme/Tabs';

|

||||

import TabItem from '@theme/TabItem';

|

||||

|

||||

# Create a Batch Job Strategy

|

||||

|

||||

In this document, you’ll learn how to create a batch job strategy in Medusa.

|

||||

|

||||

:::info

|

||||

|

||||

If you’re interested in learning more about what Batch Jobs are and how they work, check out [this documentation](./index.mdx).

|

||||

|

||||

:::

|

||||

|

||||

## Overview

|

||||

|

||||

Batch jobs can be used to perform long tasks in the background of your Medusa backend. Batch jobs are handled by batch job strategies. An example of a batch job strategy is the Import Products functionality.

|

||||

|

||||

This documentation helps you learn how to create a batch job strategy. The batch job strategy used in this example changes the status of all draft products to `published`.

|

||||

|

||||

---

|

||||

|

||||

## Prerequisites

|

||||

|

||||

### Medusa Components

|

||||

|

||||

It is assumed that you already have a Medusa backend installed and set up. If not, you can follow our [quickstart guide](../backend/install.mdx) to get started. The Medusa backend must also have an event bus module installed, which is available when using the default Medusa backend starter.

|

||||

|

||||

---

|

||||

|

||||

## 1. Create a File

|

||||

|

||||

A batch job strategy is essentially a class defined in a TypeScript or JavaScript file. You should create this file in `src/strategies`.

|

||||

|

||||

Following the example used in this documentation, create the file `src/strategies/publish.ts`.

|

||||

|

||||

---

|

||||

|

||||

## 2. Create Class

|

||||

|

||||

Batch job strategies must extend the abstract class `AbstractBatchJobStrategy` and implement its abstract methods.

|

||||

|

||||

Add the following content to the file you created:

|

||||

|

||||

```ts title=src/strategies/publish.ts

|

||||

import {

|

||||

AbstractBatchJobStrategy,

|

||||

BatchJobService,

|

||||

} from "@medusajs/medusa"

|

||||

import { EntityManager } from "typeorm"

|

||||

|

||||

class PublishStrategy extends AbstractBatchJobStrategy {

|

||||

protected batchJobService_: BatchJobService

|

||||

processJob(batchJobId: string): Promise<void> {

|

||||

throw new Error("Method not implemented.")

|

||||

}

|

||||

buildTemplate(): Promise<string> {

|

||||

throw new Error("Method not implemented.")

|

||||

}

|

||||

protected manager_: EntityManager

|

||||

protected transactionManager_: EntityManager

|

||||

|

||||

}

|

||||

|

||||

export default PublishStrategy

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## 3. Define Required Properties

|

||||

|

||||

A batch job strategy class must have two static properties: the `identifier` and `batchType` properties. The `identifier` must be a unique string associated with your batch job strategy, and `batchType` must be the batch job's type.

|

||||

|

||||

You will use the `batchType` later when you [interact with the Batch Job APIs](#test-your-batch-job-strategy).

|

||||

|

||||

Following the same example, add the following properties to the `PublishStrategy` class:

|

||||

|

||||

```ts

|

||||

class PublishStrategy extends AbstractBatchJobStrategy {

|

||||

static identifier = "publish-products-strategy"

|

||||

static batchType = "publish-products"

|

||||

|

||||

// ...

|

||||

}

|

||||

```
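
To illustrate why `batchType` must match the `type` sent in a request, here is a simplified, self-contained sketch of a lookup by type. It is not how Medusa resolves strategies internally: the stand-in classes, the `strategies` array, and `findStrategyByType` are all hypothetical and exist only for this example.

```ts
// Stand-in classes for illustration only; real strategies extend
// AbstractBatchJobStrategy from "@medusajs/medusa".
class PublishProductsStrategy {
  static identifier = "publish-products-strategy"
  static batchType = "publish-products"
}

// Hypothetical second strategy, used only to make the lookup meaningful.
class ExportReportStrategy {
  static identifier = "export-report-strategy"
  static batchType = "export-report"
}

type StrategyClass = { identifier: string; batchType: string }

const strategies: StrategyClass[] = [
  PublishProductsStrategy,
  ExportReportStrategy,
]

// Find the strategy whose batchType matches the `type` sent in a request.
function findStrategyByType(type: string): StrategyClass | undefined {
  for (const strategy of strategies) {
    if (strategy.batchType === type) {
      return strategy
    }
  }
  return undefined
}

const matchedStrategy = findStrategyByType("publish-products")
```

A request whose `type` matches no `batchType` finds no strategy in this sketch, which is why the two values need to stay in sync.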

|

||||

|

||||

---

|

||||

|

||||

## 4. Define Methods

|

||||

|

||||

### (Optional) prepareBatchJobForProcessing

|

||||

|

||||

Medusa runs this method before it creates the batch job to prepare the content of the batch job record in the database. It accepts two parameters: the batch job data sent in the body of the [Create Batch Job request](https://docs.medusajs.com/api/admin#batch-jobs_postbatchjobs), and the request instance.

|

||||

|

||||

Implementing this method is optional. For example:

|

||||

|

||||

```ts

|

||||

class PublishStrategy extends AbstractBatchJobStrategy {

|

||||

// ...

|

||||

async prepareBatchJobForProcessing(

|

||||

batchJob: CreateBatchJobInput,

|

||||

req: Express.Request

|

||||

): Promise<CreateBatchJobInput> {

|

||||

// make changes to the batch job's fields...

|

||||

return batchJob

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

### (Optional) preProcessBatchJob

|

||||

|

||||

Medusa runs this method after it creates the batch job, but before it is confirmed and processed. You can use this method to perform any necessary action before the batch job is processed. You can also use this method to add information related to the expected result.

|

||||

|

||||

For example, this implementation of the `preProcessBatchJob` method calculates how many draft products it will publish and adds it to the `result` attribute of the batch job:

|

||||

|

||||

```ts

|

||||

class PublishStrategy extends AbstractBatchJobStrategy {

|

||||

// ...

|

||||

async preProcessBatchJob(batchJobId: string): Promise<void> {

|

||||

return await this.atomicPhase_(

|

||||

async (transactionManager) => {

|

||||

const batchJob = (await this.batchJobService_

|

||||

.withTransaction(transactionManager)

|

||||

.retrieve(batchJobId))

|

||||

|

||||

const count = await this.productService_

|

||||

.withTransaction(transactionManager)

|

||||

.count({

|

||||

status: ProductStatus.DRAFT,

|

||||

})

|

||||

|

||||

await this.batchJobService_

|

||||

.withTransaction(transactionManager)

|

||||

.update(batchJob, {

|

||||

result: {

|

||||

advancement_count: 0,

|

||||

count,

|

||||

stat_descriptors: [

|

||||

{

|

||||

key: "product-publish-count",

|

||||

name: "Number of products to publish",

|

||||

message:

|

||||

`${count} product(s) will be published.`,

|

||||

},

|

||||

],

|

||||

},

|

||||

})

|

||||

})

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

The `result` attribute is an object that can hold many properties including:

|

||||

|

||||

- `count`: used to indicate how many items (in this case, products) the task will run on.

|

||||

- `advancement_count`: used to indicate the current number of items processed at a given moment. Since the batch job isn't processed yet, you set it to zero.

|

||||

- `stat_descriptors`: can be used to show human-readable messages.
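
As a sketch of how `count` and `advancement_count` can be combined, the following helper derives a progress percentage from a `result` object. The `BatchJobResultSketch` type and the `progressPercent` function are assumptions made for this example and are not part of Medusa's API.

```ts
// Minimal stand-in for the parts of a batch job's `result` described above.
type BatchJobResultSketch = {
  count?: number
  advancement_count?: number
}

// Returns progress as a whole percentage between 0 and 100.
function progressPercent(result: BatchJobResultSketch): number {
  const total = result.count ?? 0
  const done = result.advancement_count ?? 0
  if (total <= 0) {
    return 0 // nothing to process, or count not set yet
  }
  return Math.min(100, Math.round((done / total) * 100))
}

const progressBeforeProcessing = progressPercent({
  count: 8,
  advancement_count: 0,
})
const progressHalfway = progressPercent({ count: 8, advancement_count: 4 })
```

Setting `advancement_count` to zero in `preProcessBatchJob` means a client computing progress this way starts at 0% before the job is confirmed.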

|

||||

|

||||

### processJob

|

||||

|

||||

Medusa runs this method to process the batch job once it is confirmed.

|

||||

|

||||

For example, this implementation of the `processJob` method retrieves all draft products and changes their status to published:

|

||||

|

||||

```ts

|

||||

class PublishStrategy extends AbstractBatchJobStrategy {

|

||||

// ...

|

||||

async processJob(batchJobId: string): Promise<void> {

|

||||

return await this.atomicPhase_(

|

||||

async (transactionManager) => {

|

||||

const productServiceTx = this.productService_

|

||||

.withTransaction(transactionManager)

|

||||

|

||||

const productList = await productServiceTx

|

||||

.list({

|

||||

status: [ProductStatus.DRAFT],

|

||||

})

|

||||

|

||||

for (const product of productList) {
  // Await each update so all products are published before the
  // batch job's result is updated below.
  await productServiceTx
    .update(product.id, {
      status: ProductStatus.PUBLISHED,
    })
}

|

||||

|

||||

await this.batchJobService_

|

||||

.withTransaction(transactionManager)

|

||||

.update(batchJobId, {

|

||||

result: {

|

||||

advancement_count: productList.length,

|

||||

},

|

||||

})

|

||||

}

|

||||

)

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

:::note

|

||||

|

||||

When a batch job is canceled, the processing of the batch job doesn’t automatically stop. You will have to manually check for changes in the status of the batch job. For example, you can retrieve the batch job and use the condition `batchJob.status === BatchJobStatus.CANCELED` to check if the batch job was canceled.

|

||||

|

||||

:::
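
The manual check described in the note can be sketched as a loop that re-reads the job status before handling each item. To stay self-contained, this example is synchronous and uses stand-ins: `JobStatus`, `processUntilCanceled`, `getStatus`, and `handleItem` are hypothetical names. In a real strategy, the status check would instead be an awaited retrieval of the batch job, compared against `BatchJobStatus.CANCELED`.

```ts
// Stand-in status values; in Medusa you would compare against
// BatchJobStatus.CANCELED as described in the note above.
type JobStatus = "processing" | "canceled"

// Processes items one by one and re-checks the job status before each item.
// `getStatus` and `handleItem` are hypothetical callbacks supplied by the caller.
function processUntilCanceled<T>(
  items: T[],
  getStatus: () => JobStatus,
  handleItem: (item: T) => void
): number {
  let processed = 0
  for (const item of items) {
    if (getStatus() === "canceled") {
      break // stop early; remaining items are left untouched
    }
    handleItem(item)
    processed++
  }
  return processed
}

// Simulate a cancellation that arrives after two items were handled.
const handledIds: string[] = []
const processedCount = processUntilCanceled(
  ["prod_1", "prod_2", "prod_3"],
  () => (handledIds.length >= 2 ? "canceled" : "processing"),
  (id) => {
    handledIds.push(id)
  }
)
```

Checking before each item keeps the amount of work done after a cancellation to at most one item, at the cost of one status lookup per item.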

|

||||

|

||||

### buildTemplate

|

||||

|

||||

This method can be used in cases where you provide a template file to download, such as when implementing an import or export functionality.

|

||||

|

||||

If it's not necessary for your use case, you can simply return an empty string:

|

||||

|

||||

```ts

|

||||

class PublishStrategy extends AbstractBatchJobStrategy {

|

||||

// ...

|

||||

async buildTemplate(): Promise<string> {

|

||||

return ""

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

### (Optional) shouldRetryOnProcessingError

|

||||

|

||||

Medusa uses this method to decide whether it should retry the batch job if an error occurs during processing.

|

||||

|

||||

By default, the `AbstractBatchJobStrategy` class implements this method and returns `false`, indicating that if a batch job’s process fails it will not be retried.

|

||||

|

||||

If you would like to change that behavior, you can override this method to return a different value:

|

||||

|

||||

```ts

|

||||

class PublishStrategy extends AbstractBatchJobStrategy {

|

||||

// ...

|

||||

protected async shouldRetryOnProcessingError(

|

||||

batchJob: BatchJob,

|

||||

err: unknown

|

||||

): Promise<boolean> {

|

||||

return true

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

### (Optional) handleProcessingError

|

||||

|

||||

Medusa uses this method to handle errors that occur during processing. By default, it changes the status of the batch job to `failed` and sets the `errors` property of the batch job’s `result` attribute.

|

||||

|

||||

You can use this method as implemented in `AbstractBatchJobStrategy` at any point in your batch job process to set the batch job as failed.

|

||||

|

||||

You can also override this method in your batch job strategy and change how it works:

|

||||

|

||||

```ts

|

||||

class PublishStrategy extends AbstractBatchJobStrategy {

|

||||

// ...

|

||||

protected async handleProcessingError<T>(

|

||||

batchJobId: string,

|

||||

err: unknown,

|

||||

result: T

|

||||

): Promise<void> {

|

||||

// different implementation...

|

||||

}

|

||||

}

|

||||

```
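
Because `err` is typed as `unknown`, a custom implementation usually needs to turn it into something that can be stored or logged. The helper below is one possible sketch; `toErrorMessage` is a hypothetical name, and storing errors as plain strings is an assumption made for this example.

```ts
// Hypothetical helper: turns a caught value of unknown type into a message
// that can be stored alongside a failed batch job.
function toErrorMessage(err: unknown): string {
  if (err instanceof Error) {
    return err.message
  }
  if (typeof err === "string") {
    return err
  }
  return "Unknown error"
}

const messageFromError = toErrorMessage(new Error("Product not found"))
const messageFromOther = toErrorMessage({ code: 500 })
```

Narrowing with `instanceof` and `typeof` avoids unsafe casts and gives a predictable fallback for values that are neither errors nor strings.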

|

||||

|

||||

---

|

||||

|

||||

## 5. Run Build Command

|

||||

|

||||

After you create the batch job and before testing it out, you must run the build command in the directory of your Medusa backend:

|

||||

|

||||

```bash npm2yarn

|

||||

npm run build

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Test your Batch Job Strategy

|

||||

|

||||

This section covers how to test and use your batch job strategy. Make sure to start your backend first:

|

||||

|

||||

```bash

|

||||

npx medusa develop

|

||||

```

|

||||

|

||||

You must also use an authenticated user to send batch job requests. You can refer to the [authentication guide in the API reference](https://docs.medusajs.com/api/admin#authentication) for more details.

|

||||

|

||||

If you follow along with the JS Client code snippets, make sure to [install and set it up first](../../js-client/overview.md).

|

||||

|

||||

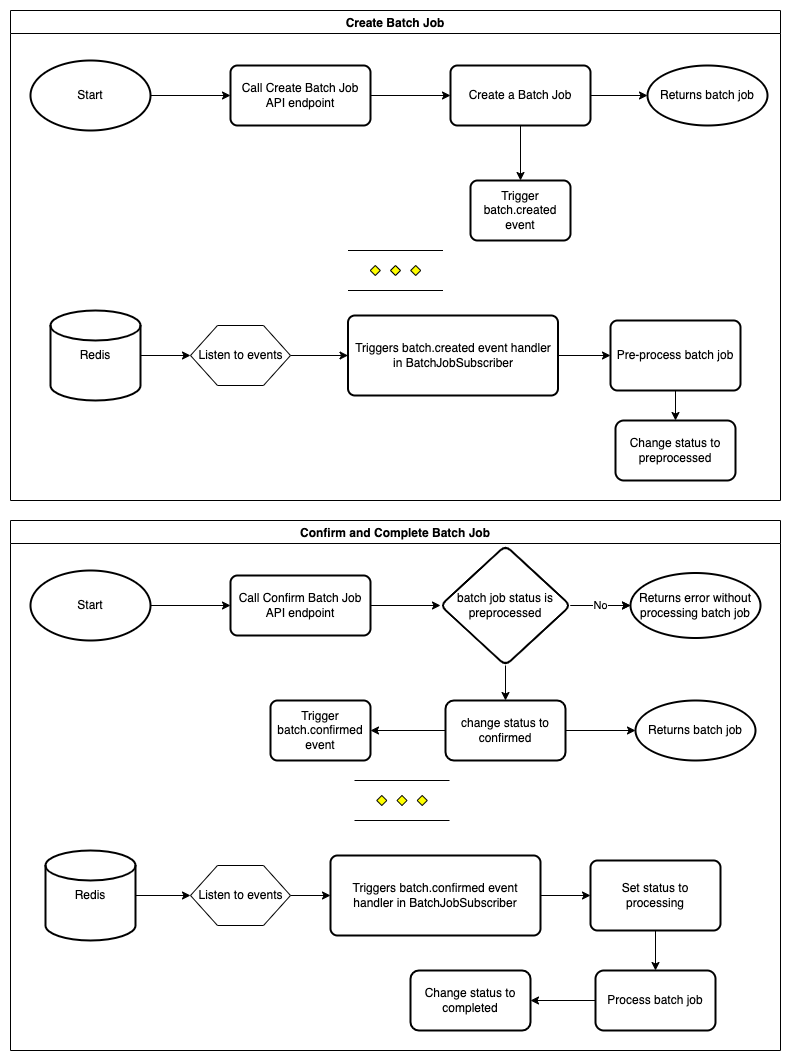

### Create Batch Job

|

||||

|

||||

The first step is to create a batch job using the [Create Batch Job endpoint](https://docs.medusajs.com/api/admin#batch-jobs_postbatchjobs). In the body of the request, you must set the `type` to the value of `batchType` in the batch job strategy you created.

|

||||

|

||||

For example, this creates a batch job of the type `publish-products`:

|

||||

|

||||

<Tabs groupId="request-types" isCodeTabs={true}>

|

||||

<TabItem value="client" label="Medusa JS Client" default>

|

||||

|

||||

```jsx

|

||||

medusa.admin.batchJobs.create({

|

||||

type: "publish-products",

|

||||

context: { },

|

||||

dry_run: true,

|

||||

})

|

||||

.then(({ batch_job }) => {

|

||||

console.log(batch_job.status)

|

||||

})

|

||||

```

|

||||

|

||||

</TabItem>

|

||||

<TabItem value="fetch" label="Fetch API">

|

||||

|

||||

```jsx

|

||||

fetch(`<BACKEND_URL>/admin/batch-jobs`, {

|

||||

method: "POST",

|

||||

credentials: "include",

|

||||

headers: {

|

||||

"Content-Type": "application/json",

|

||||

},

|

||||

body: JSON.stringify({

|

||||

type: "publish-products",

|

||||

context: { },

|

||||

dry_run: true,

|

||||

}),

|

||||

})

|

||||

.then((response) => response.json())

|

||||

.then(({ batch_job }) => {

|

||||

console.log(batch_job.status)

|

||||